What Happened

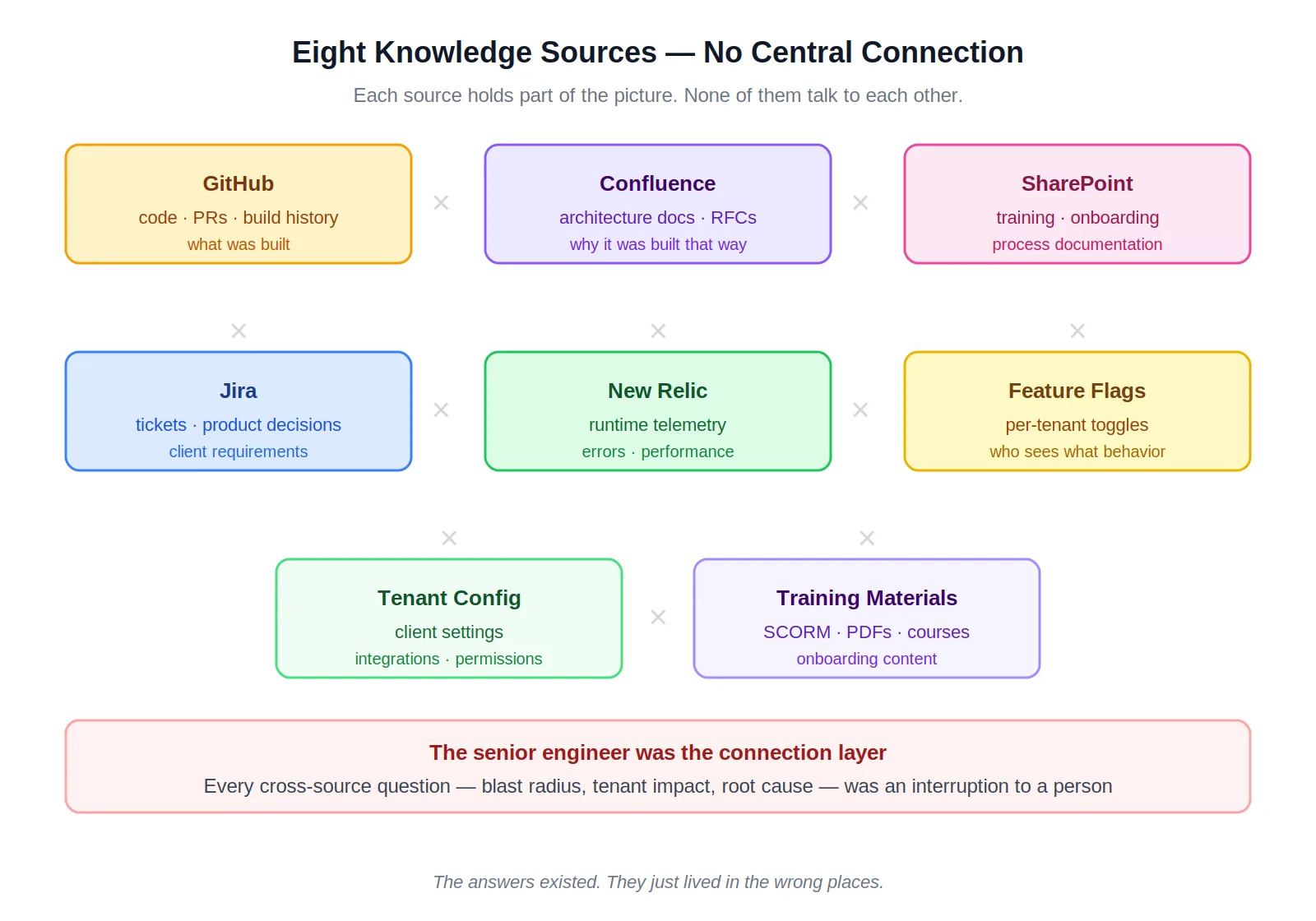

The knowledge problem on a mature platform isn't usually that documentation doesn't exist. It's that it lives in six places, none of them cross-referenced, and the relationship between them only exists in the heads of the people who built it.

Confluence held the architecture decisions. GitHub held the implementation history. SharePoint held training materials. Jira held the product reasoning and client-specific decisions. New Relic held the runtime behavior. Feature flag definitions and tenant configuration were scattered across systems that only senior engineers fully understood.

The result was a specific and recurring failure mode. When a production bug surfaced, tracing which recent PRs, merges, or GitHub Actions builds might have caused it required manually correlating across four tools. When a new feature was being scoped, understanding which tenants would be affected — and how their configurations would interact with the change — required asking someone who'd been there long enough to know. AI tools hit the same ceiling. They could reason about the implementation. They couldn't reason about the product decisions, client configurations, and operational context that explained why the implementation looked the way it did. Code alone is half the picture.

How It Was Addressed

The goal was a RAG system that indexed every knowledge source and exposed the full corpus to AI clients through a standard interface — so that questions like "what recent changes could have caused this production issue" or "which tenants does this change affect" returned answers drawing from code, PRs, Jira history, and tenant configuration simultaneously.

I built this as a solo project across five .NET components: a shared Core library, a CLI Indexer, a background Worker, an Admin web UI, and an MCP Server exposing 17 tools to AI clients.

The hardest decision was choosing local embeddings over OpenAI's hosted API. The compliance requirements were unambiguous: confidential information and PII could not leave the network, which ruled out the hosted embedding API. Rather than accepting lock-in to a single local provider, I built an abstraction layer: OllamaEmbeddingService and OpenAiEmbeddingService sit behind the same interface and are swappable via config. The system can run fully on-premises, fully on Azure OpenAI, or split across both. Compliance drove the decision; the abstraction made it livable. The tradeoffs of running local embeddings in production are real and worth understanding before committing to the approach.
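The provider abstraction can be illustrated with a minimal Python sketch. The real components are .NET, so this is only an analogue: the two class names come from the text above, while the `EmbeddingService` interface, the `embed` method, the config keys, and the placeholder vectors are assumptions made for illustration.

```python
import hashlib
from dataclasses import dataclass
from typing import Protocol


class EmbeddingService(Protocol):
    """Hypothetical shared interface; the article names only the implementations."""

    def embed(self, text: str) -> list[float]: ...


def _placeholder_vector(salt: str, text: str, dims: int = 8) -> list[float]:
    # Stand-in for a real model call: derives a deterministic vector from a hash.
    digest = hashlib.sha256(f"{salt}:{text}".encode("utf-8")).digest()
    return [byte / 255.0 for byte in digest[:dims]]


@dataclass
class OllamaEmbeddingService:
    base_url: str  # assumed: an endpoint that stays inside the network

    def embed(self, text: str) -> list[float]:
        # A real implementation would call the local model here.
        return _placeholder_vector("ollama", text)


@dataclass
class OpenAiEmbeddingService:
    endpoint: str  # assumed: a hosted endpoint, used only where policy allows

    def embed(self, text: str) -> list[float]:
        # A real implementation would call the hosted API here.
        return _placeholder_vector("openai", text)


def build_embedding_service(config: dict) -> EmbeddingService:
    """Pick the provider from config so callers never name a concrete class."""
    provider = config.get("provider", "ollama")
    if provider == "ollama":
        return OllamaEmbeddingService(base_url=config["base_url"])
    if provider == "openai":
        return OpenAiEmbeddingService(endpoint=config["endpoint"])
    raise ValueError(f"unknown embedding provider: {provider!r}")
```

Because callers depend only on the interface, switching providers in this sketch is a config change rather than a code change, which is the property the abstraction layer is meant to provide.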

The Solution

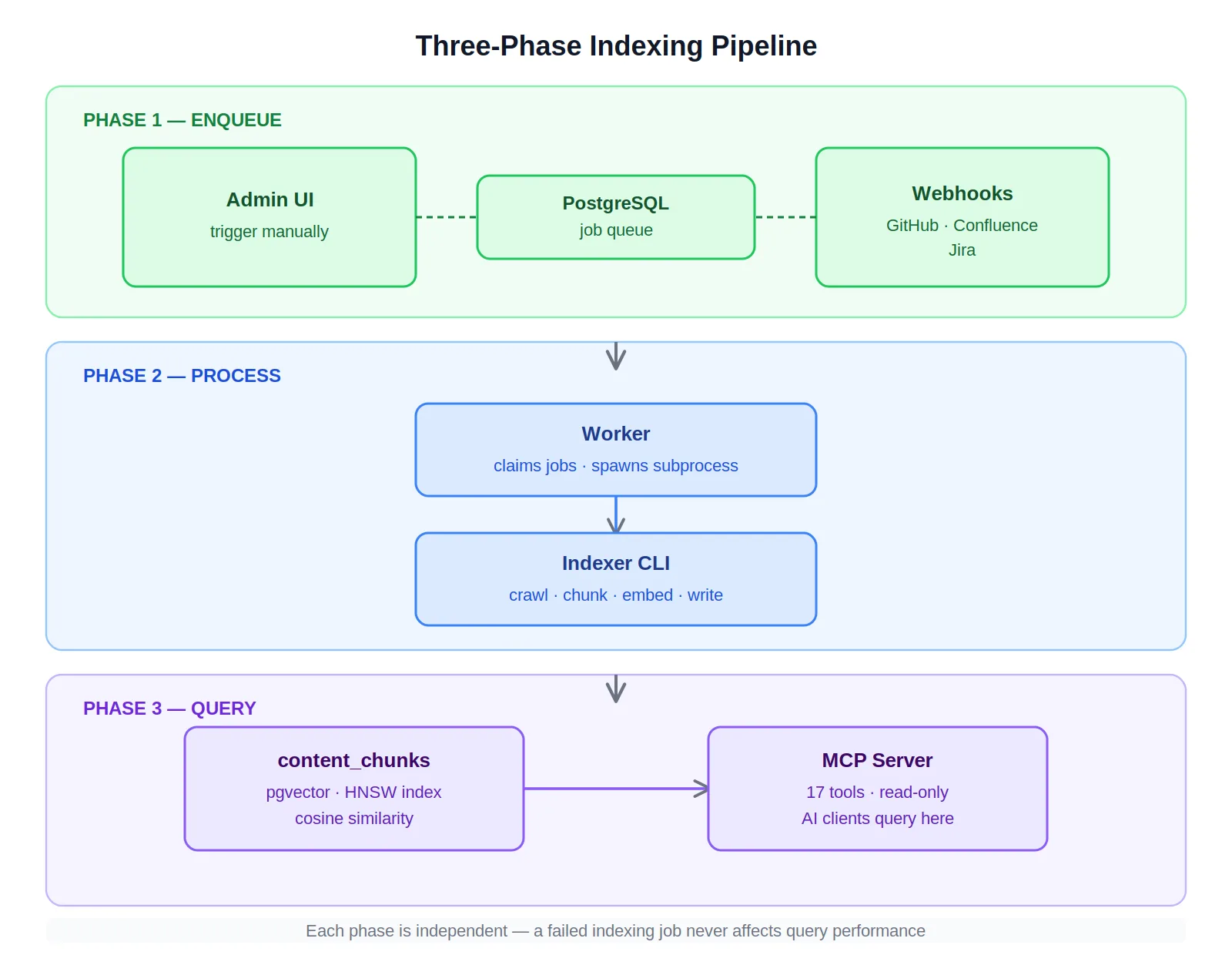

The system runs as a three-phase pipeline — and separating those three phases cleanly is the decision that made everything else easier to build and operate.

Phase 1 — Enqueueing. Indexing is triggered from the Admin UI or by inbound webhooks from GitHub, Confluence, and Jira. Each trigger writes a job to the indexer_jobs queue in PostgreSQL. No work happens yet — just a durable record that work needs to happen, with a status lifecycle that survives restarts.
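A status lifecycle of this kind might look like the following sketch. The status names, the allowed transitions, and the job fields are all assumptions for illustration; the text above specifies only that jobs land in indexer_jobs with a lifecycle that survives restarts.

```python
from enum import Enum


class JobStatus(str, Enum):
    # Hypothetical status names; the article does not list them.
    PENDING = "pending"
    RUNNING = "running"
    SUCCEEDED = "succeeded"
    FAILED = "failed"


# Assumed transition table: a failed job may be re-queued for retry.
ALLOWED_TRANSITIONS = {
    JobStatus.PENDING: {JobStatus.RUNNING},
    JobStatus.RUNNING: {JobStatus.SUCCEEDED, JobStatus.FAILED},
    JobStatus.FAILED: {JobStatus.PENDING},
    JobStatus.SUCCEEDED: set(),
}


def new_job(source: str, trigger: str) -> dict:
    """Enqueueing only records that work is needed; nothing is indexed yet."""
    return {"source": source, "trigger": trigger, "status": JobStatus.PENDING}


def transition(job: dict, new_status: JobStatus) -> dict:
    """Return a copy of the job in its new status, rejecting illegal moves."""
    if new_status not in ALLOWED_TRANSITIONS[job["status"]]:
        raise ValueError(f"cannot move {job['status'].value} -> {new_status.value}")
    return {**job, "status": new_status}
```

In a real deployment each of these records would be a row in the database rather than an in-memory dict, which is what lets the lifecycle survive a restart.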

Phase 2 — Processing. The Worker claims jobs via SELECT … FOR UPDATE SKIP LOCKED, which lets several Worker instances pull from the same table without claiming the same job and without a dedicated queue service. Each claimed job spawns the Indexer CLI as a subprocess. That isolation matters: when a job goes wrong (a malformed document, an export with encoding issues), it crashes the subprocess, not the Worker. The Worker marks the job failed, records it in index_failures, schedules a retry with exponential backoff, and claims the next job. One bad source doesn't block the other seven.
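The three mechanics in this phase (claiming with SKIP LOCKED, subprocess isolation, and backoff) can be sketched as follows. Only the indexer_jobs table name and the locking clause come from the text; the column names, status values, delay constants, and function names are assumptions, and the SQL is shown as a string rather than executed.

```python
import subprocess
import sys

# Assumed schema: indexer_jobs(id, status, created_at). Rows locked by another
# worker are skipped, so two workers never claim the same job.
CLAIM_JOB_SQL = """
UPDATE indexer_jobs
SET status = 'running'
WHERE id = (
    SELECT id FROM indexer_jobs
    WHERE status = 'pending'
    ORDER BY created_at
    LIMIT 1
    FOR UPDATE SKIP LOCKED
)
RETURNING id;
"""


def backoff_seconds(attempt: int, base: float = 30.0, cap: float = 3600.0) -> float:
    """Delay before retry `attempt` (1-based): base * 2^(attempt - 1), capped."""
    return min(cap, base * 2 ** (attempt - 1))


def run_job(command: list[str], timeout: float = 600.0) -> tuple[bool, str]:
    """Run one indexing job in a child process so a crash stays contained."""
    try:
        result = subprocess.run(
            command, capture_output=True, text=True, timeout=timeout
        )
    except subprocess.TimeoutExpired:
        return False, "timed out"
    except OSError as exc:  # e.g. the executable could not be started
        return False, str(exc)
    if result.returncode != 0:
        return False, result.stderr.strip() or f"exit code {result.returncode}"
    return True, ""


if __name__ == "__main__":
    # A deliberately failing child process leaves the parent running.
    ok, error = run_job([sys.executable, "-c", "import sys; sys.exit(3)"])
    print(ok, error, backoff_seconds(attempt=2))
```

The parent only ever sees an exit code and captured stderr, so whatever happens inside the child cannot take the claiming loop down with it.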

Phase 3 — Querying. The MCP Server is read-only. It exposes semantic search, source-specific retrieval, schema lookup, and tenant configuration queries as tools that AI clients reason with — not an API they call. A slow query doesn't affect the indexing pipeline. An indexing run doesn't degrade query performance. The phases share a PostgreSQL database and nothing else.

That separation is what made the system operationally stable. Failures are contained. Scaling is targeted. Deploying a fix to the Worker doesn't require touching the MCP Server.

Eight sources, one interface. Each knowledge source is implemented as a plugin assembly discovered at runtime via PluginRegistry. Adding a source means writing a new assembly that implements IIndexerPlugin — not modifying Core, not changing the Worker, not updating the MCP Server. The plugin boundary is where the system's capacity to grow lives.
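The plugin boundary can be sketched in Python as below. This is an analogue, not the .NET implementation: the real PluginRegistry discovers assemblies at runtime, whereas this sketch substitutes explicit registration, and the `source_name` attribute and `fetch_documents` method are hypothetical members invented for the example.

```python
from typing import Iterable, Protocol


class IndexerPlugin(Protocol):
    """Hypothetical Python stand-in for the IIndexerPlugin contract."""

    source_name: str

    def fetch_documents(self) -> Iterable[str]: ...


class PluginRegistry:
    """Maps source names to plugins; callers never import a concrete plugin."""

    def __init__(self) -> None:
        self._plugins: dict[str, IndexerPlugin] = {}

    def register(self, plugin: IndexerPlugin) -> None:
        if plugin.source_name in self._plugins:
            raise ValueError(f"duplicate plugin: {plugin.source_name}")
        self._plugins[plugin.source_name] = plugin

    def get(self, source_name: str) -> IndexerPlugin:
        return self._plugins[source_name]

    def sources(self) -> list[str]:
        return sorted(self._plugins)


class WikiPlugin:
    # Illustrative plugin; a new source is a new class, not a change to the registry.
    source_name = "wiki"

    def fetch_documents(self) -> Iterable[str]:
        return ["page one", "page two"]
```

Adding a source in this sketch means writing another class with the same two members and registering it; the registry and its callers stay untouched.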

SHA256 incremental indexing. Content hashing means unchanged files aren't re-embedded on every run. Webhook receivers for GitHub, Confluence, and Jira trigger targeted re-indexing of only what changed — keeping the index current without a full rebuild on every commit.
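The hash comparison behind incremental indexing can be sketched in a few lines. The function names and the in-memory dictionary of stored hashes are assumptions for illustration; in the system described here the hashes would live alongside the index in the database.

```python
import hashlib


def content_hash(text: str) -> str:
    """SHA-256 hex digest of the document content."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def select_changed(
    documents: dict[str, str], stored_hashes: dict[str, str]
) -> dict[str, str]:
    """Return {doc_id: new_hash} for documents whose content differs from
    the stored hash, including documents seen for the first time."""
    changed = {}
    for doc_id, text in documents.items():
        digest = content_hash(text)
        if stored_hashes.get(doc_id) != digest:
            changed[doc_id] = digest
    return changed


if __name__ == "__main__":
    stored: dict[str, str] = {}
    docs = {"readme": "hello", "design": "queue in postgres"}
    first = select_changed(docs, stored)   # everything is new
    stored.update(first)                   # persist only after embedding succeeds
    docs["design"] = "queue in postgres, three phases"
    print(sorted(select_changed(docs, stored)))
```

Returning the new hashes instead of writing them immediately lets the caller persist a hash only once the corresponding embedding has been stored, so a failed run is retried rather than silently skipped.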

The outcome was a system where engineers — and the AI tools they work with — could ask questions the codebase alone could never answer. Which customers does this change affect? What recent merges correlate with this production error? What was the product decision behind this design? Those questions now have answers.